01 You Already Use AI

You might think AI is something new, something complicated, something that does not apply to you. But you have been using it for years. Every time you pick up your phone, AI is working in the background.

Predictive text

Your phone suggests the next word as you type. That is AI learning your writing patterns.

Spam filters

Your email service decides what is junk and what is not. It checks each message against patterns seen in known spam.

Voice assistants

Siri, Alexa, and Google Assistant understand your voice and answer questions.

Recommendations

Netflix suggests films. Spotify picks songs. They compare your choices to millions of other users.

Navigation

Google Maps reroutes you around traffic in real time using live data from other drivers.

Spell check

Grammar and spelling suggestions in Word, Outlook, and your browser are increasingly AI-powered.

None of these felt scary when you first used them. AI is simply software that learns from patterns in data and uses those patterns to do something useful. That is it.

Predictive text uses a language model trained on millions of messages. It builds a probability map of which words typically follow other words, then weights it with your personal typing history.
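The "probability map" idea can be sketched in a few lines of Python. This is a toy illustration only, not how any real keyboard is built: the sample messages, the function names, and the `personal_weight` value are all made up for the example. It counts which word follows which, then gives your own typing history extra weight.

```python
from collections import Counter, defaultdict

def build_follow_counts(messages):
    """Count how often each word is followed by each other word."""
    counts = defaultdict(Counter)
    for message in messages:
        words = message.lower().split()
        for current_word, next_word in zip(words, words[1:]):
            counts[current_word][next_word] += 1
    return counts

def suggest_next(word, general_counts, personal_counts, personal_weight=3):
    """Blend general and personal counts; personal history counts for more."""
    word = word.lower()
    combined = Counter()
    for next_word, n in general_counts.get(word, {}).items():
        combined[next_word] += n
    for next_word, n in personal_counts.get(word, {}).items():
        combined[next_word] += n * personal_weight  # assumed weighting
    return [candidate for candidate, _ in combined.most_common(3)]

# Hypothetical training messages (stand-ins for "millions of messages").
general = build_follow_counts([
    "see you later",
    "see you soon",
    "see you soon",
    "thank you very much",
])
# Hypothetical personal typing history.
personal = build_follow_counts(["see you tomorrow", "see you tomorrow"])

print(suggest_next("you", general, personal))
```

Because "tomorrow" appears in the personal history, it outranks "soon" even though "soon" is more common in the general messages.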

Spam filters use a combination of rule-based checks (known spam phrases, suspicious links) and machine learning that improves as users mark emails as spam or not spam.

Voice assistants convert your speech to text (speech recognition), process the meaning (natural language understanding), find an answer, and convert it back to speech (text-to-speech). Four AI systems working in sequence.

Recommendation engines use collaborative filtering: they find users whose behaviour is similar to yours and suggest things those users liked that you have not tried yet.
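A minimal sketch of collaborative filtering, assuming each user is described only by a set of titles they liked. The user names, film titles, and the choice of Jaccard similarity (shared likes divided by all likes) are illustrative assumptions; real services use far richer data and models.

```python
# Hypothetical viewing data: each user and the titles they liked.
likes = {
    "you": {"Film A", "Film B", "Film C"},
    "user_1": {"Film A", "Film B", "Film D"},
    "user_2": {"Film C", "Film E"},
    "user_3": {"Film F"},
}

def jaccard(a, b):
    """Similarity between two sets: shared items / all items."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def recommend(target, likes, top_n=2):
    """Score unseen titles by how similar their fans are to the target user."""
    scores = {}
    for other, items in likes.items():
        if other == target:
            continue
        weight = jaccard(likes[target], items)
        for item in items - likes[target]:  # only titles the target has not tried
            scores[item] = scores.get(item, 0.0) + weight
    ranked = sorted(scores.items(), key=lambda pair: (-pair[1], pair[0]))
    return [item for item, score in ranked[:top_n] if score > 0]

print(recommend("you", likes))
```

Here `user_1` shares two likes with you, so their other favourite ("Film D") ranks first. `user_3` shares nothing with you, so "Film F" is never suggested.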

Navigation AI processes real-time GPS data from millions of phones, historical traffic patterns, and road incident reports to calculate the fastest route and update it continuously.

Spell check tools have evolved from simple dictionary matching to contextual AI that understands grammar, tone, and even intent. Modern tools like Grammarly use large language models.

02 Types of AI You Already Know

AI is not one thing. It is a family of technologies, each designed to do something different. Here are the main types, explained in plain English.

Reactive AI

These tools follow fixed rules to respond to specific situations. The rule-based part of your spam filter is reactive AI: it checks incoming emails against known patterns and decides "junk" or "not junk." On its own, it does not learn about you personally; it just applies the rules it was given.

Predictive AI

These tools spot patterns in large amounts of data and make predictions. When Netflix suggests a film you might enjoy, it is comparing your viewing history against millions of other users. Some local authorities use predictive AI for demand forecasting in social care services.

Conversational AI

These tools understand and respond to human language. Siri, Alexa, and the chatbots on council websites are conversational AI. They can answer questions and follow simple instructions, but they are working from a script, not thinking for themselves.

Generative AI

This is the newest type and the one getting all the attention. Tools like ChatGPT, Microsoft Copilot, and Google Gemini can create new text, images, and code. They work by predicting what word should come next, based on patterns in the data they were trained on. They do not understand what they produce.

Reactive AI follows simple if/then rules. Your email inbox does this all day long: IF this message matches known spam patterns, THEN move it to your junk folder. IF it does not match, THEN send it to your inbox. A purely reactive system cannot learn or adapt on its own (modern spam filters add machine learning on top of these rules). Think of it as a very detailed instruction sheet written by humans.
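The if/then logic above can be written out directly. This is a deliberately simple sketch: the phrase list and the function name are invented for illustration, and no real email provider works from a list this short.

```python
# Hypothetical "instruction sheet" written by humans.
SPAM_PHRASES = ["you have won", "claim your prize", "act now"]

def classify_email(subject, body):
    """IF the message matches a known spam phrase THEN junk, ELSE inbox."""
    text = f"{subject} {body}".lower()
    for phrase in SPAM_PHRASES:
        if phrase in text:
            return "junk"
    return "inbox"

print(classify_email("Congratulations", "You have WON a holiday"))
print(classify_email("Team meeting", "Agenda attached"))
```

The function gives the same answer for the same email every time, and it only improves when a person edits the list of phrases.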

Predictive AI spots patterns and makes educated guesses. At its most basic level, this is like Grammarly or predictive text on your phone. If you type "the cat sat on the," the next prediction is highly likely to be "mat" because the model has seen that pattern thousands of times. More advanced versions predict things like which customers might cancel a subscription or which patients are at higher risk.

Conversational AI is the chatbot you deal with before you get through to a real person. When you call a helpline or use live chat, a bot will search its knowledge base to try to answer your question before transferring you to a human. It is designed for structured back-and-forth, not open-ended content creation.

Generative AI is generative for a reason: it creates new content each time. Ask ChatGPT the same question twice and you might get a similar answer, a slightly different one, or something completely different. That is because it is predicting the most likely next word each time, and small changes in probability can take the response in a new direction.

Want to understand the technical detail behind each type? That is covered in the Intermediate section.

03 So What is Generative AI?

Generative AI is the type that creates new content. When you type a question into ChatGPT and it writes a response, that is generative AI at work.

Here is what it actually does: it has been trained on enormous amounts of text (books, articles, websites, forums), much of it from the internet. From all of that, it has learned patterns about how language works. When you ask it something, it predicts the most likely next word, then the next, then the next, until it has built a complete response.

It does not think. It does not understand. It does not have opinions or feelings. It is a very sophisticated pattern matching system.

This is important for social care because it means generative AI can produce text that sounds confident and professional but is completely wrong. In our sector, wrong information can have serious consequences.

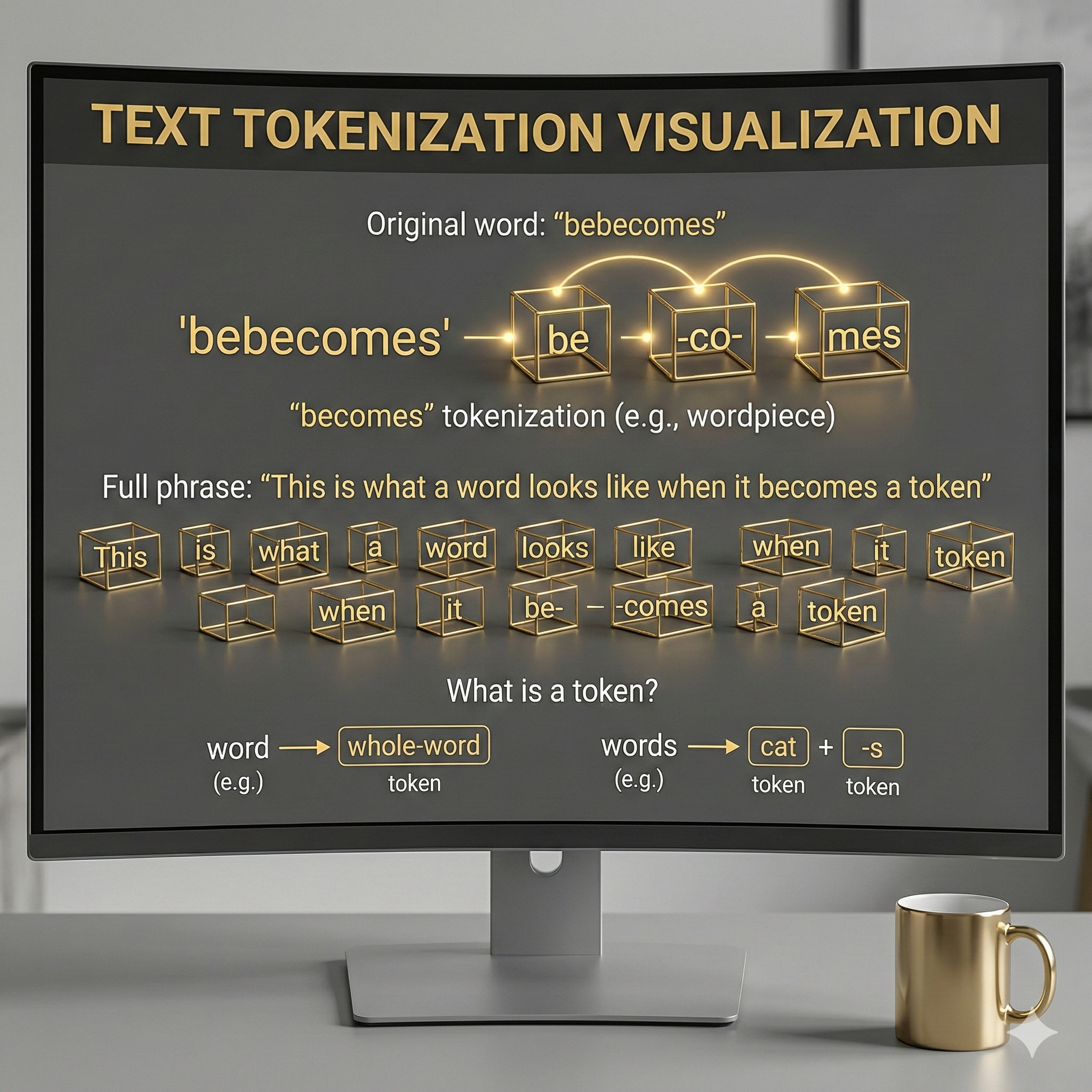

Tokens: AI does not read words the way you do. It chops text into small chunks called tokens. Sometimes a token is a whole word, sometimes it is part of a word. The word "becomes" might be split into "be," "co," and "mes." Short common words like "the" or "cat" usually stay whole.

Why does this matter? Because the AI is not reading sentences the way you are right now. It is processing a long sequence of these chunks, one after another, working out which chunk is most likely to come next.
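To make the idea of "chunks" concrete, here is a toy tokeniser. It is a simplified sketch, not the method any particular AI tool uses: the tiny vocabulary is invented, and the "take the longest known chunk first" rule is an assumption chosen because it is easy to follow.

```python
# Hypothetical vocabulary of known chunks.
VOCAB = {"the", "cat", "sat", "be", "co", "mes"}

def tokenize_word(word, vocab):
    """Split one word into the longest known chunks, left to right."""
    tokens = []
    start = 0
    while start < len(word):
        # Try the longest possible chunk first, then shrink it.
        for end in range(len(word), start, -1):
            piece = word[start:end]
            # Fall back to a single character if nothing longer is known.
            if piece in vocab or end - start == 1:
                tokens.append(piece)
                start = end
                break
    return tokens

def tokenize(text, vocab=VOCAB):
    """Tokenise a sentence word by word."""
    tokens = []
    for word in text.lower().split():
        tokens.extend(tokenize_word(word, vocab))
    return tokens

print(tokenize("The cat becomes"))
```

With this made-up vocabulary, "the" and "cat" stay whole while "becomes" is split into "be", "co", and "mes", matching the example above.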

Why answers differ each time: Generative AI has a "temperature" setting that controls how adventurous it is. Think of it like a dial. Turn it low and it always picks the safest, most predictable next word. Turn it high and it takes more chances, which is why the same question can give you a slightly different answer, or sometimes a completely different one.

Why it gets things wrong: Because it is predicting likely text, not looking up facts. If the most probable next word creates a sentence that sounds confident and professional but is completely false, the AI has no way of knowing. It has no concept of "true" or "false," only "what word probably comes next." In social care, this is why professional judgement must always stay with the practitioner.

Want to understand context windows, attention mechanisms, and how tokens are weighted? That is covered in the Intermediate section.

04 In Practice: Adult Social Care

Here are two realistic scenarios where a practitioner might use AI as a tool to support their work. In both cases, the professional judgement stays with the social worker.

Example 1

Structuring a care plan review

A practitioner is reviewing the care plan for someone with complex needs: multiple long-term conditions, several services involved, and a family who want to understand what is changing. The practitioner uses AI to help organise their notes into a clear structure with sections for each need, what is working, and what needs to change. The AI drafts the framework. The practitioner fills it with their professional knowledge, their understanding of this person, and the context that no tool can know.

Example 2

Making policy accessible

A social worker needs to explain a section of relevant legislation to a service user and their family. The original wording is dense and full of legal language. They ask AI to rewrite it in plain English at a reading level suitable for a general audience. The AI produces a first draft. The practitioner checks it for accuracy, adds the detail that matters for this person's situation, and removes anything that could be misleading.

Care plan prompt example: "I need to structure a care plan review for a person with the following needs: [list needs]. Please create a framework with sections for: current situation, what is working well, what needs to change, and recommendations. Use plain English suitable for a multidisciplinary team meeting."

Policy simplification prompt: "Please rewrite the following section of this legislation in plain English at a reading age of 12. Keep the legal meaning accurate but remove all jargon. [Paste section]."

Key safeguard: Never paste personal data about a real service user into any AI tool unless your organisation has approved it, completed a Data Protection Impact Assessment (DPIA), and confirmed the tool meets UK GDPR requirements. Use anonymised or fictional scenarios for practice.

05 In Practice: Children's Social Care

The same principles apply in children's services. AI can support the administrative side of the work while the practitioner retains full control of the analysis, judgement, and relationship.

Example 3

Preparing for a child protection conference

A social worker is preparing for an initial child protection conference. They have months of case notes, referrals, and assessment records to pull together into a coherent chronology. They use AI to help organise the timeline and structure their report. The AI sorts the information. The social worker provides the analysis: what the patterns mean, what the risks are, and what needs to happen to keep this child safe. The judgement is entirely theirs.

Example 4

Creating age-appropriate information

A young person in care needs to understand their rights and what is happening in their plan. The formal documents are written for professionals, not for a 14-year-old. The social worker uses AI to draft an explanation in language that is clear, honest, and appropriate for that young person's age. The practitioner then checks: does it sound right for this child? Does it explain things without being frightening or patronising? Is it accurate?

Chronology prompt example: "I have the following case events in rough chronological order. Please organise them into a clear timeline table with columns for: date, event, source, and significance. Flag any gaps longer than 30 days. [Paste anonymised events]."

Young person's rights prompt: "Please rewrite the following information about a child's rights in care for a 14-year-old. Use simple, direct language. Avoid anything that sounds frightening or clinical. The tone should be warm, honest, and empowering. [Paste text]."

Critical safeguarding note: Extra caution is needed in children's services. Never include a child's name, date of birth, school, or any identifying information in an AI prompt. Safeguarding data must stay within your organisation's approved systems at all times.

06 What Does "Open Source" Mean?

You will hear this term a lot in conversations about AI. Here is what it means.

Open source means the code behind a piece of software is publicly available. Anyone can look at it, check how it works, suggest improvements, or adapt it for their own needs.

Proprietary (closed source) means the code is kept private. You can use the tool, but you cannot see how it works inside.

Think of it like a recipe

Open source is like a recipe shared online. You can read every ingredient, see every step, adjust it to your taste, and share your version with others. Proprietary software is like a ready meal. You can eat it, but you have no idea what is in it, how it was made, or whether the ingredients suit your needs.

Why does this matter for social care? Because when your organisation uses AI tools that handle sensitive information about people's lives, you need to know what those tools are doing with that data. Open source tools allow that transparency. Proprietary tools ask you to trust the company that made them.

Neither is automatically better. What matters is that your organisation understands what it is using and has made an informed choice.

Key open source models: Meta's LLaMA, Mistral, and Stability AI's image models are among the most widely used. These can be downloaded and run on your own infrastructure, meaning your data does not have to leave your organisation.

Key proprietary models: OpenAI's GPT-4 (ChatGPT), Anthropic's Claude, and Google's Gemini are proprietary. Your prompts are sent to their servers for processing, which raises data protection questions for social care.

The trade-off: Open source models give you control and transparency but require technical expertise to deploy. Proprietary models are easier to use but require trust in the provider's data handling practices and policies.

For social care: The decision depends on what data you are processing, your organisation's technical capacity, and whether a DPIA has been completed. Your information governance team should be involved in this decision.

07 A Glimpse Under the Bonnet

You do not need to be a data scientist to work with AI. But knowing a few key concepts will help you ask better questions, spot problems, and feel more confident.

Tokens

AI breaks text into small chunks called tokens. A token might be a whole word, part of a word, or a single character. This is how AI reads.

Training Data

The enormous datasets AI learns from: books, websites, articles. The quality and range of this data shapes what AI can and cannot do.

Hallucinations

When AI generates information that sounds plausible but is factually wrong. It predicts likely text, it does not check facts.

Prompts

The instruction you give to AI. Better prompts produce better outputs. Learning to prompt well is one of the most useful skills you can develop.

Word embeddings: AI represents words as numbers in a mathematical space (a vector). Words with similar meanings end up close together. "Doctor" and "nurse" would be near each other; "doctor" and "banana" would be far apart.

Semantic spaces: These vectors create a multi-dimensional map of meaning. AI navigates this map to find relationships between concepts. This is how it can answer questions about things it was not explicitly taught.

Clusters: Groups of related concepts naturally form clusters in the semantic space. Social care terms like "safeguarding," "wellbeing," and "risk assessment" would cluster together because they frequently appear in similar contexts.
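The idea of words being "close together" or "far apart" can be shown with a small calculation. The three-number vectors below are invented for illustration (real models learn vectors with hundreds or thousands of numbers), and cosine similarity is one common way to measure closeness, used here as an assumption.

```python
import math

# Hypothetical word vectors, made up for this example.
EMBEDDINGS = {
    "doctor": [0.9, 0.8, 0.1],
    "nurse": [0.85, 0.75, 0.2],
    "banana": [0.1, 0.05, 0.95],
}

def cosine_similarity(a, b):
    """Return a score near 1 for similar directions, near 0 for unrelated ones."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

doctor_nurse = cosine_similarity(EMBEDDINGS["doctor"], EMBEDDINGS["nurse"])
doctor_banana = cosine_similarity(EMBEDDINGS["doctor"], EMBEDDINGS["banana"])

print(f"doctor vs nurse:  {doctor_nurse:.2f}")
print(f"doctor vs banana: {doctor_banana:.2f}")
```

With these made-up numbers, "doctor" and "nurse" score close to 1, while "doctor" and "banana" score much lower. A cluster is simply a group of words that all score highly against each other.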

Attention mechanisms: Transformer models (the architecture behind GPT, Claude, and Gemini) use "attention" to weigh which parts of the input are most relevant to each part of the output. This is how they maintain context over long passages of text.
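A rough sketch of the "weighing" step, assuming the scaled dot-product form of attention described in the original transformer paper. The two-number vectors are invented, and a real model does this across many layers with learned values, so treat this as an illustration of the arithmetic only.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_weights(query, keys):
    """Score each input against the query, then normalise into weights."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    return softmax(scores)

# Hypothetical vectors: one for the word being produced (query),
# and one for each word in the input (keys).
query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]

weights = attention_weights(query, keys)
print([round(w, 2) for w in weights])
```

The first input points in the same direction as the query, so it receives the largest weight. The model "pays most attention" to it when producing that part of the output.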

Why this matters for practitioners: Understanding these concepts helps you see why AI is better at some tasks than others, why it sometimes produces irrelevant responses, and why the way you phrase your prompt makes such a difference to the output.

08 Test What You Have Learned

Before moving on, check what you have taken in. Ten questions based on the sections above. Score 7 or more and you are ready for the Intermediate section. No timer, no pressure. You can review any answers you get wrong and try again.

Where to Next?

You now have the foundations. You know what AI is, what types exist, how it shows up in social care, and what open source means. You have a sense of what sits under the bonnet.

Ready for more depth? The Intermediate section explains how AI actually learns, what happens inside the model, and how to evaluate outputs critically. It is written for someone who has finished this section and wants to go further.

Remember: every expert started as a beginner. The fact that you are reading this means you are already ahead of most.

Intermediate Level: Going Deeper

You know the basics. You know AI predicts text, you know about tokens, you know hallucinations are a risk. Now let us look at how it actually works in a bit more depth. We are not going into university-level maths here, just enough to understand why AI behaves the way it does and enough to make better decisions about using it.

01 How AI Actually Learns

In the beginner section we said AI "learns from patterns." But what does that actually mean?

Think of it like this. Imagine you gave a teenager a library of a million books and said: "Read all of these, then I am going to show you half a sentence and you have to guess the next word." After reading all those books, they would get pretty good at it. Not because they understand what they are saying, but because they have seen so many patterns that they can predict what usually comes next.

That is essentially what an AI model does during training. It reads billions of pages of text and learns which words tend to follow other words, in which contexts. The result is a massive mathematical model of language patterns.

The revision analogy

Imagine revising for a history exam by reading every textbook ever written about World War II. You would start to notice patterns: certain events always get mentioned together, certain phrases keep appearing. If someone gave you the start of a sentence about D-Day, you could probably finish it convincingly, even if you did not truly understand the strategic decisions involved. That is how AI works with language. Pattern recognition, not comprehension.

Key point for practitioners: AI has no life experience, no professional judgement, and no understanding of what its words mean for real people. It is completing patterns. This is why a human must always review the output, especially in social care where words carry weight and consequences.

02 The Four Types of AI: Under the Bonnet

You met the four types in the beginner section with everyday examples. Now let us look at what makes them technically different.

Reactive AI

Operates on if/then logic. No memory between interactions. Cannot improve on its own. Think of it as a very sophisticated set of rules written by humans. Your email spam filter runs the same checks every time.

Predictive AI

Uses statistical models trained on historical data. It spots correlations and uses them to forecast outcomes. The risk: correlation is not causation. If biased data goes in, biased predictions come out.

Conversational AI

Combines natural language processing (NLP) with either rule-based dialogue or large language models. Designed for structured interaction, not open-ended creation. Think chatbots that follow a script versus ones that generate responses.

Generative AI

Uses transformer architectures (the "T" in GPT). Processes text as sequences of tokens and uses attention mechanisms to understand context. Generates responses by predicting the probability of each next token.

The important distinction: reactive and predictive AI work with fixed rules or existing data patterns. Generative AI creates new content each time. That is why the same prompt can give you a different answer tomorrow. It is also why hallucinations happen: the model is generating, not retrieving.

03 Tokens, Context Windows, and Why Length Matters

In the beginner section we explained that AI breaks text into small chunks called tokens. Now let us understand why that matters in practice.

In English, a token is roughly three quarters of a word on average. The word "understanding" might become "under" + "standing." Numbers, punctuation, and unusual words often get split into more tokens than you would expect.

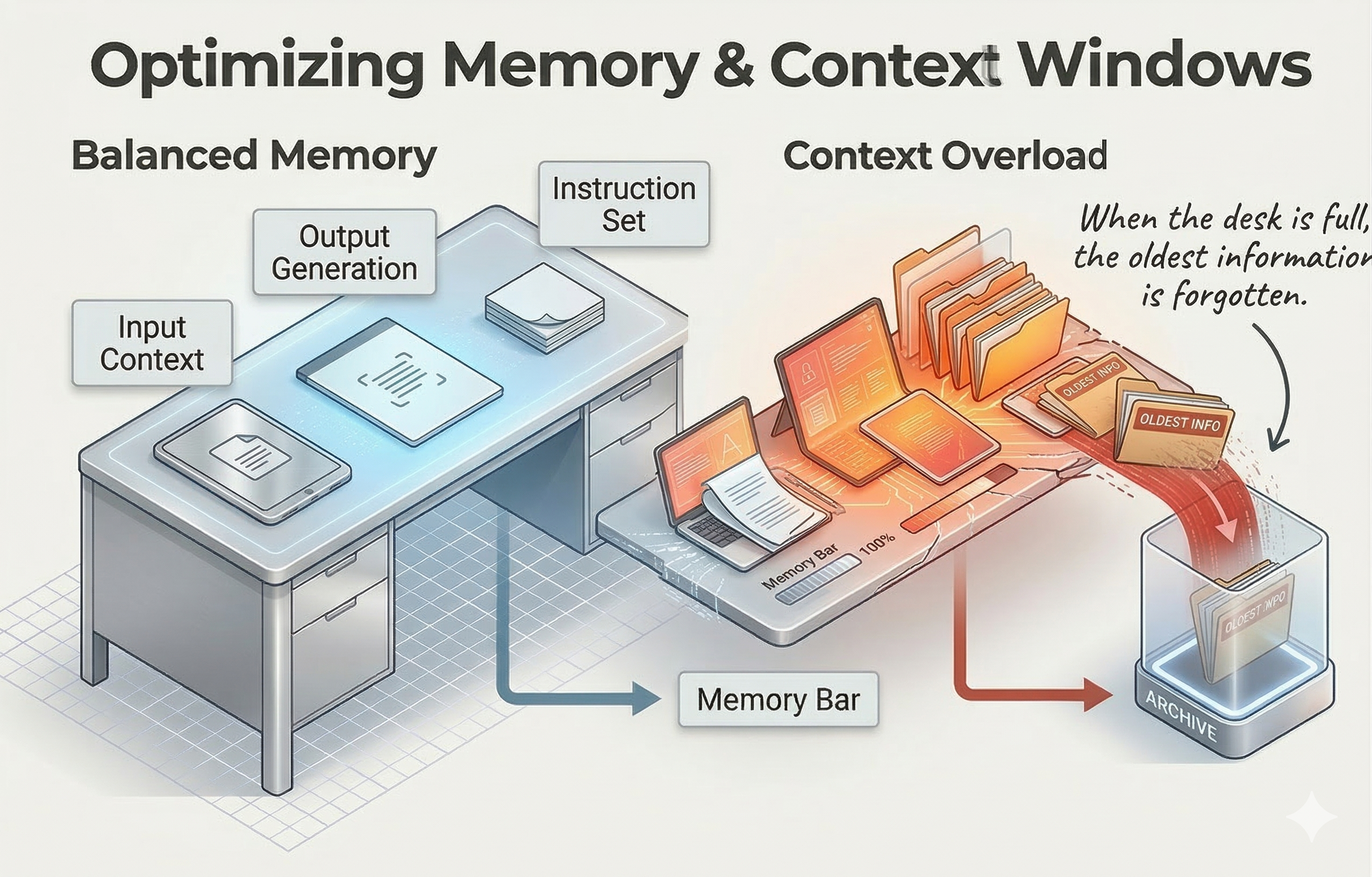

Every AI model has a context window: the maximum number of tokens it can process in one go. When people say a model has a "128k context window," they mean it can handle roughly 96,000 words at once. That sounds like a lot, but here is the catch: both your input and the AI's response share that window.

The desk analogy

Imagine your context window is a desk. Everything the AI needs to work with has to fit on that desk: your question, any background information you provide, and the space needed for the AI to write its answer. Once the desk is full, things start falling off the edges. The AI "forgets" earlier parts of the conversation. If you are pasting a long document and asking detailed questions about it, you are using up desk space quickly.

Why this matters for social care: If you paste a lengthy case chronology into an AI tool, the model may not be able to hold all of it in memory. It might miss details from the beginning of the document. This is a practical limitation, not a failure. Understanding it helps you work with AI more effectively: upload in batches rather than one long document, and break tasks into smaller, focused chunks.
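The arithmetic behind the desk analogy can be sketched as a quick estimate. This assumes the rule of thumb above (one token is about three quarters of a word, so tokens are roughly words × 4 ÷ 3). The function names and the 2,000 tokens reserved for the answer are assumptions for illustration. Real tools count tokens with their own tokeniser, so treat the result as a rough guide.

```python
def estimate_tokens(text):
    """Estimate tokens from a word count: words * 4 / 3, rounded up."""
    word_count = len(text.split())
    return (word_count * 4 + 2) // 3

def fits_in_window(document, question, window_tokens=128_000,
                   reserved_for_answer=2_000):
    """Check that input plus space for the answer fits on the 'desk'."""
    used = estimate_tokens(document) + estimate_tokens(question)
    return used + reserved_for_answer <= window_tokens

def split_into_batches(text, max_tokens_per_batch):
    """Break a long text into smaller pieces of roughly equal size."""
    words = text.split()
    words_per_batch = max(1, max_tokens_per_batch * 3 // 4)
    return [
        " ".join(words[i:i + words_per_batch])
        for i in range(0, len(words), words_per_batch)
    ]

# A stand-in for a 96,000-word document.
long_document = " ".join(["word"] * 96_000)
print(estimate_tokens(long_document))
print(fits_in_window(long_document, "Summarise this."))
print(len(split_into_batches(long_document, 32_000)))
```

A 96,000-word document fills a 128k window on its own, leaving no room for your question or the answer, which is why splitting it into batches helps.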

04 Temperature: Why AI is Creative (or Not)

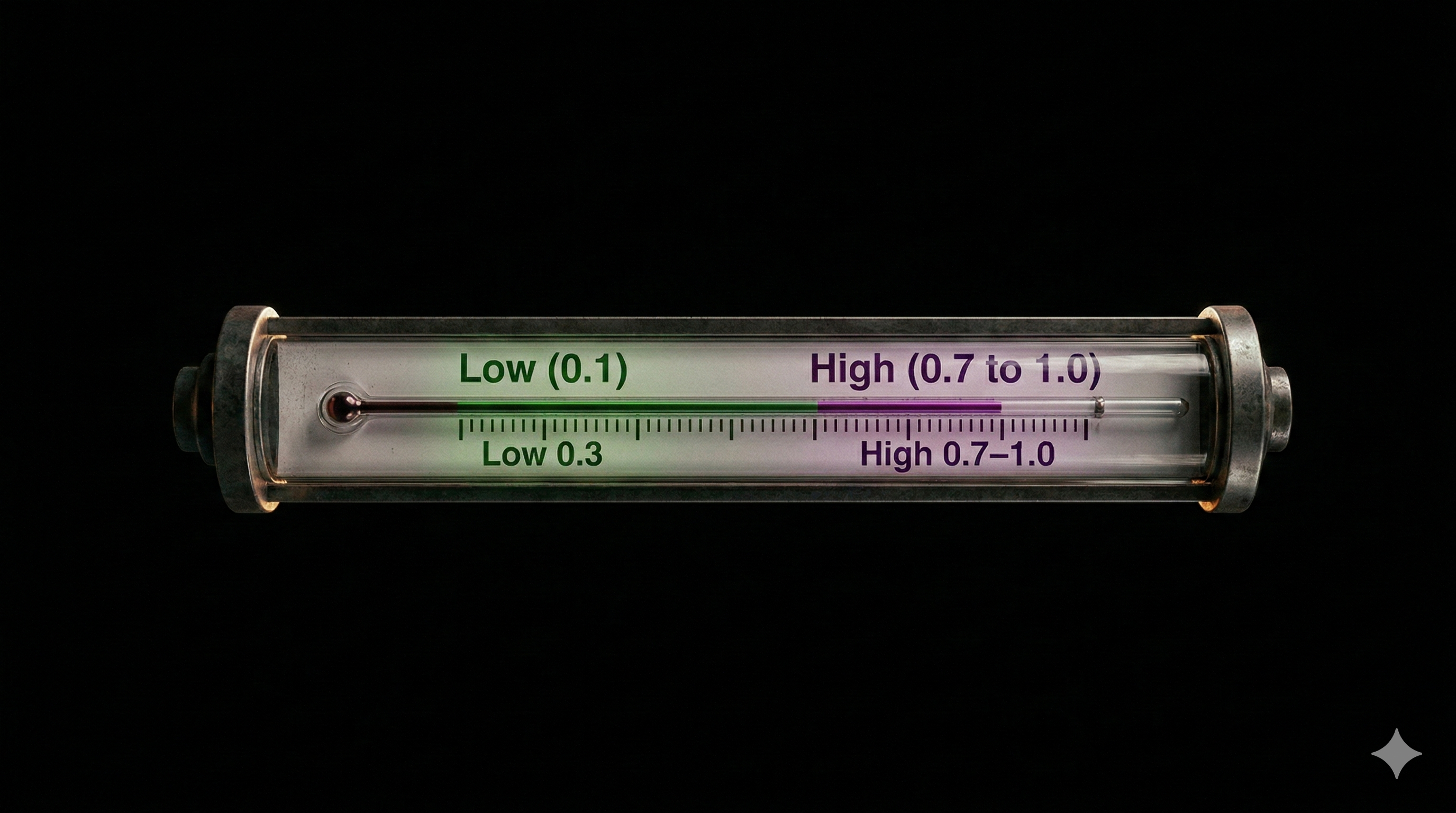

Temperature is a setting that controls how predictable or creative the AI's output is. It is a number, usually between 0 and 1, although some tools allow higher values.

Low temperature (0.1 to 0.3)

The model picks the most likely next word almost every time. Outputs are focused, consistent, and repetitive. Good for factual tasks: summarising a policy, extracting dates from a chronology, writing something that needs to be precise.

High temperature (0.7 to 1.0)

The model is allowed to pick less likely words. Outputs are more varied, creative, and sometimes surprising. Good for brainstorming, drafting creative content, or generating different perspectives. Also more likely to hallucinate.

Most AI tools set this for you, so you may never adjust it directly. But knowing it exists helps explain why the same prompt can give you a tightly focused answer one day and a more wide-ranging response the next.

Social care note: For any task involving factual accuracy (case notes, chronologies, policy summaries), you want low-temperature behaviour. If the tool lets you set it, keep it low. If it does not, always check outputs against the source material.
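For readers who want to see the dial in action, here is a minimal sketch of how temperature reshapes the probabilities of the next word. The three candidate words and their scores are invented. Dividing the scores by the temperature before converting them to probabilities (a "softmax") is the standard approach, simplified here.

```python
import math

def next_word_probabilities(scores, temperature):
    """Convert raw scores to probabilities; temperature must be above 0."""
    scaled = {word: score / temperature for word, score in scores.items()}
    highest = max(scaled.values())
    exps = {word: math.exp(value - highest) for word, value in scaled.items()}
    total = sum(exps.values())
    return {word: e / total for word, e in exps.items()}

# Hypothetical scores for the word after "the cat sat on the".
scores = {"mat": 3.0, "sofa": 2.0, "roof": 1.0}

low = next_word_probabilities(scores, temperature=0.2)
high = next_word_probabilities(scores, temperature=1.0)

for word in scores:
    print(f"{word:5s} low={low[word]:.3f} high={high[word]:.3f}")
```

At the low setting, "mat" takes almost all of the probability, so the model picks it nearly every time. At the high setting, "sofa" and "roof" have a realistic chance of being chosen, which is where the variety (and the extra risk) comes from.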

05 Why AI Hallucinates (and Why It Cannot Stop)

We covered this briefly in the beginner section. Now let us understand the mechanics, because this is the single biggest risk for social care practitioners.

For every position in a response, the AI calculates a probability for every possible next token. There are tens of thousands of options. It picks one based on those probabilities and the temperature setting. This is repeated for every token in the response, many times per second.

The critical thing to understand: the model has no concept of truth. It has a concept of probability. If the most likely next word creates a false statement that sounds plausible, the model will produce it with complete confidence. It has no internal fact-checker. It does not know it is wrong.

The confident student analogy

Imagine a student who has read widely but never checked their sources. They can write fluently about almost anything and their essays sound convincing. But when you fact-check specific claims, some turn out to be wrong. The student is not lying. They genuinely think they are right because the sentence "sounded right" based on everything they have read. That is how AI hallucinates.

For social care practice: If an AI generates a date, a legal reference, a statistic, or a claimed fact, verify it. Always. No exceptions. A hallucinated date in a chronology or a made-up reference to legislation could have serious consequences for a family.

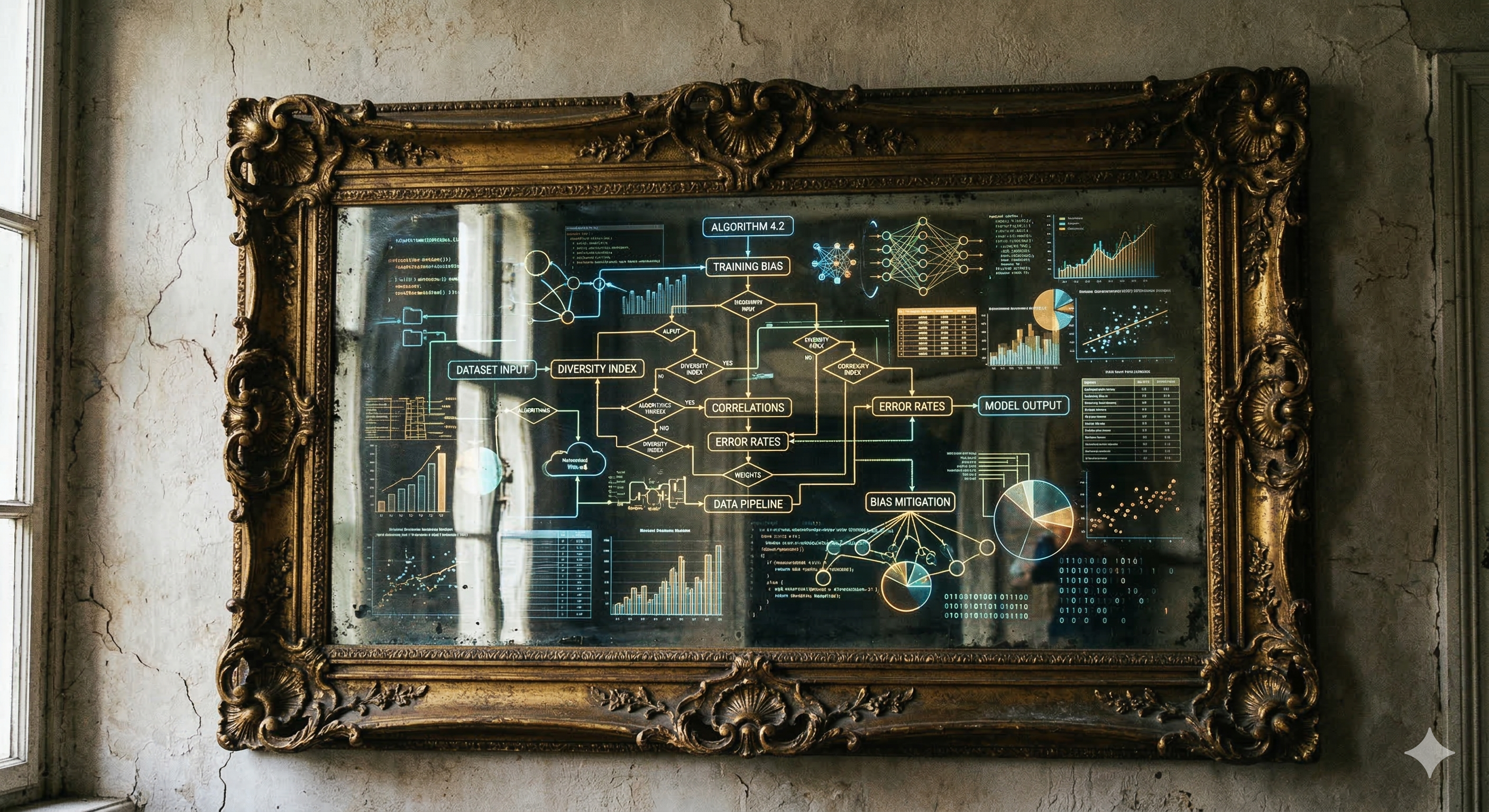

06 Bias: What Goes In Comes Out

AI learns from data created by humans. Humans have biases. Therefore AI has biases. It is that straightforward.

If the training data contains more text written about certain communities in negative terms (for example, linking poverty with poor parenting, or associating certain ethnic groups with specific risks), the model will reproduce those patterns. It does not know they are biased. It just knows they are statistically common in the text it learned from.

The mirror analogy

AI is a mirror reflecting the data it was trained on. If society's written records are biased (and they are), the mirror reflects those biases back at us. It does not add bias, but it does not correct for it either. In social care, where decisions affect people's lives and liberty, this matters enormously.

What this means in practice: If you ask AI to help draft a risk assessment and the language it produces feels like it is applying stereotypes or making assumptions based on demographics rather than evidence, that is bias at work. Your professional judgement is the safeguard. Never accept AI output that does not align with anti-oppressive practice.

07 Prompt Engineering: Getting Better Outputs

Now that you understand how AI processes language, you can see why how you ask matters as much as what you ask. This is prompt engineering: the skill of writing instructions that get the AI to produce useful, accurate, relevant responses.

Weak prompt

"Write about safeguarding."

Too vague. The AI does not know the audience, the context, the format, or the purpose. You will get generic content that is not useful for your specific situation.

Strong prompt

"Summarise the key safeguarding responsibilities under relevant legislation for social care practitioners in my sector. Use plain English, no jargon. Keep it under 200 words. Do not include any names or case examples."

Specific audience, clear format, word limit, safety boundary. The output will be much more useful.

Key principles: Be specific about your audience. State the format you want. Set boundaries (word count, what to include and exclude). Give context. If you want the AI to take a particular role ("write as if explaining to a newly qualified social worker"), say so. The more guidance you give, the better the result.
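If you reuse the same kind of prompt often, the principles above can be turned into a simple template. This is one possible sketch: the function name, the field names, and the example task wording are illustrative assumptions, and the output is only a piece of text for you to paste into whichever approved tool you use.

```python
def build_prompt(task, audience, output_format, word_limit, exclusions):
    """Assemble a prompt that states audience, format, length and boundaries."""
    lines = [
        f"Task: {task}",
        f"Audience: {audience}",
        f"Format: {output_format}",
        f"Length: no more than {word_limit} words.",
    ]
    for item in exclusions:
        lines.append(f"Do not include {item}.")
    return "\n".join(lines)

prompt = build_prompt(
    task="Summarise the key safeguarding responsibilities for practitioners.",
    audience="a newly qualified social worker",
    output_format="plain English, no jargon",
    word_limit=200,
    exclusions=["names", "case examples"],
)
print(prompt)
```

A template like this makes it harder to forget the audience or the safety boundary when you are busy.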

TESSA Tools' VERA:H framework (Voice & Validation, Evidence & Ethics, Reason & Responsibility, Attribution & Accountability, Human) gives you a structured approach to this. If you want to learn the full framework, check out our Framework page or book onto one of our training sessions.

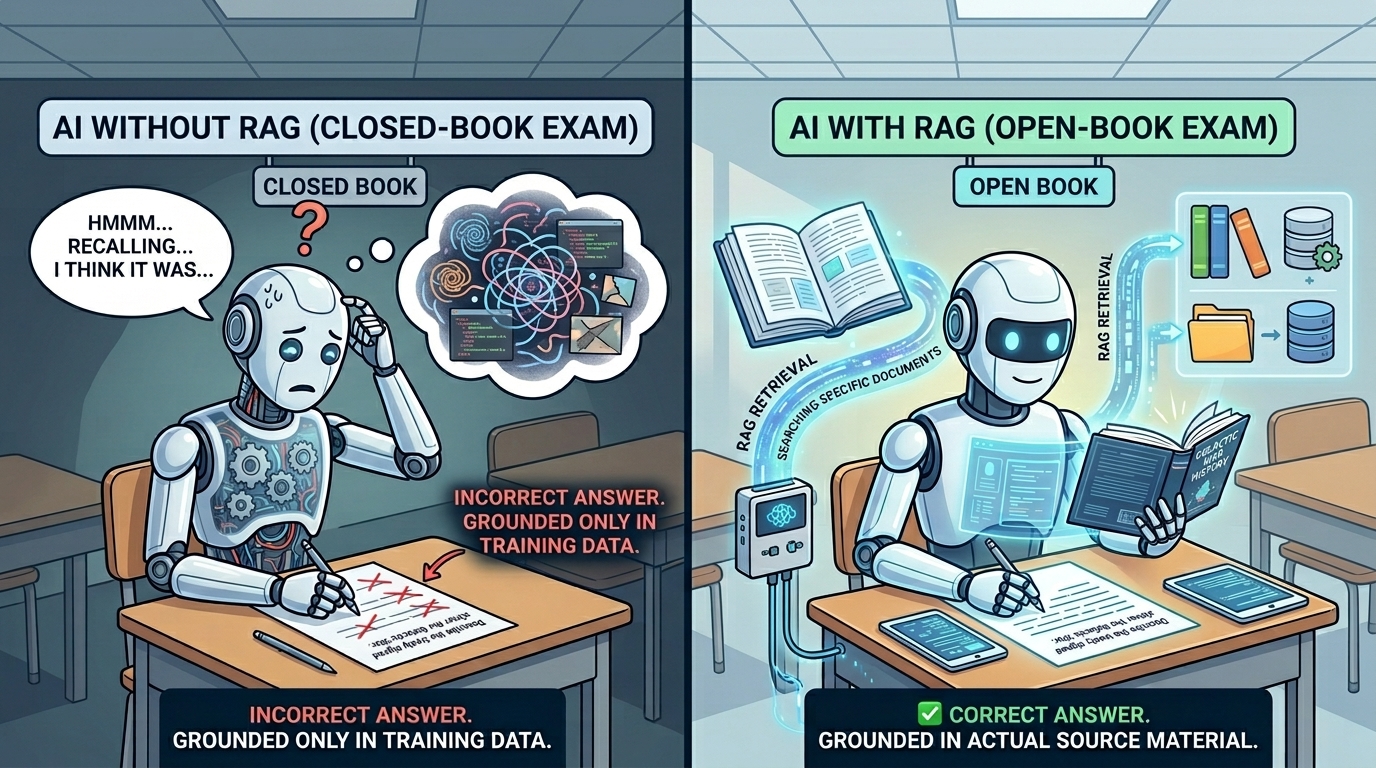

08 RAG: Teaching AI to Check Its Sources

Retrieval Augmented Generation (RAG) is one of the most important developments for making AI safer and more useful. Here is how it works in plain terms.

Normally, when you ask AI a question, it generates an answer entirely from patterns it learned during training. As we have seen, this means it can hallucinate. RAG adds an extra step: before generating an answer, the system searches a specific set of documents and retrieves relevant information. The AI then generates its response based on that retrieved information, not just its general training.

The open-book exam analogy

Without RAG, AI is doing a closed-book exam: answering from memory, which might be wrong. With RAG, it is doing an open-book exam: it can look things up in specific documents before answering. The answers are more likely to be accurate because they are grounded in actual source material.

Why this matters for social care: A RAG-based system could be pointed at your organisation's policies, relevant legislative guidance, or inspection standards. When a practitioner asks a question, the AI searches those documents first, then generates an answer based on what it found. This reduces hallucinations and keeps responses grounded in your approved content. It does not eliminate the need for human checking, but it significantly reduces the risk.
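The two steps of RAG (retrieve, then generate) can be sketched as follows. This is a simplified illustration under clear assumptions: the policy snippets are fictional, the retrieval uses plain word overlap where real systems typically compare embeddings, and the final step only builds the prompt rather than calling any AI model.

```python
import re

# Hypothetical approved documents (fictional policy snippets).
DOCUMENTS = {
    "lone_working_policy": "Staff visiting homes alone must log the visit and check in after the visit.",
    "data_protection_policy": "Personal data must only be stored in approved systems.",
    "supervision_policy": "Practitioners receive supervision at least every four weeks.",
}

def words(text):
    """Lowercase a text and return its set of words."""
    return set(re.findall(r"[a-z]+", text.lower()))

def retrieve(question, documents, top_n=1):
    """Step 1: find the documents sharing the most words with the question."""
    question_words = words(question)
    ranked = sorted(
        documents.items(),
        key=lambda item: len(question_words & words(item[1])),
        reverse=True,
    )
    return ranked[:top_n]

def build_grounded_prompt(question, documents):
    """Step 2: hand the retrieved passages to the model with the question."""
    passages = retrieve(question, documents)
    context = "\n".join(f"[{name}] {text}" for name, text in passages)
    return (
        "Answer using only the passages below. "
        "If the answer is not there, say so.\n\n"
        f"{context}\n\nQuestion: {question}"
    )

question = "How often do practitioners receive supervision?"
print(build_grounded_prompt(question, DOCUMENTS))
```

The model is asked to answer from the retrieved passage rather than from memory. The passage name in square brackets also gives the practitioner something specific to check the answer against.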

Well Done. You Have Gone Deeper.

You now understand how AI learns, why it hallucinates, what bias looks like, and how prompt engineering and RAG can make it safer. These are the concepts that separate someone who uses AI from someone who uses it well.

Ready for more? The Advanced section covers transformer architectures, governance frameworks, the EU AI Act, environmental costs, and building an organisational AI strategy.

Beyond the Basics

You understand how AI works and how to use it safely. This section zooms out. It covers where AI came from, the breakthroughs that made headlines, the environmental cost nobody talks about, and the ethical debates shaping the future. None of this is essential for your day job, but if you want to understand the wider landscape, this is where to start.

01 A Brief History of AI

AI is not new. The ideas behind it have been evolving for over seventy years. What changed recently is scale: more data, more computing power, and a breakthrough architecture called the transformer.

The dream begins

Alan Turing asks "can machines think?" Researchers coin the term "artificial intelligence" for a workshop at Dartmouth College. Early programs can play checkers and prove simple maths theorems.

Expert systems and the first AI winter

Rule-based systems try to capture human expertise. They work for narrow problems but crumble with complexity. Funding dries up. This pattern of hype and disappointment repeats more than once.

Neural networks return

Inspired by how brains learn, researchers build layered networks that improve with data. Faster computers make deeper networks practical. Machine learning starts beating hand-coded rules.

Deep learning takes off

A neural network called AlexNet crushes the competition in an image recognition challenge. This moment convinces the industry that deep learning works. Investment floods in.

The transformer is born

Researchers at Google publish "Attention Is All You Need," introducing the transformer architecture. This is the engine behind today's major language models, from GPT to Gemini to Claude. It allows models to process entire passages at once rather than word by word.

ChatGPT changes everything

OpenAI releases ChatGPT (built on GPT-3.5) in November 2022. It reaches an estimated 100 million users within about two months. AI moves from a research curiosity to something your colleagues are using at their desks.

Agents and autonomy

AI systems start chaining tasks together: searching the web, writing code, booking meetings, making decisions. These "agents" can act with less human oversight, which brings enormous questions about accountability and control.

Want to explore further? Search for:

"history of artificial intelligence" timeline

02 Headline Breakthroughs

A few landmark moments that help explain why AI is being taken so seriously.

AlphaGo (2016)

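DeepMind's system beat Lee Sedol, one of the world's strongest players, at Go, a game with more possible board positions than there are atoms in the observable universe. It learned from records of human games and then by playing millions of games against itself, discovering strategies that surprised even top professionals.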

AlphaFold (2020)

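Another DeepMind project, which predicts the 3D structure of a protein from its amino acid sequence. Scientists had spent decades on this problem. AlphaFold's 2020 results were a step change in accuracy, and predicted structures for nearly every known protein have since been published, accelerating drug discovery and biological research worldwide.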

GPT-4 and multimodal AI (2023)

Language models learned to process images alongside text. You could upload a photo and ask questions about it. This opened up new possibilities, and new risks, for any field that works with documents and visual evidence.

AI agents (2024 onwards)

Systems that do not just answer questions but take actions: browsing the web, writing and running code, completing multi-step tasks. The shift from "tool you ask" to "system that acts" is where the biggest governance questions now sit.

Want to explore further? Search for:

"AlphaFold protein structure" OR "AlphaGo documentary"

03 The Black Box Problem

One of the biggest challenges in AI is that nobody fully understands how large language models arrive at their outputs. We know the maths. We can see the billions of numerical weights inside the model. But we cannot reliably trace why a model chose one word over another or made a specific recommendation.

This is called the interpretability problem, and it matters for social care because decisions about people's lives need to be explainable. If an AI-assisted tool flags a family as high risk, a practitioner needs to understand the reasoning, not just accept the output.

Why this matters for social care

UK social care legislation and professional standards require that decisions affecting people are transparent and accountable. A system that cannot explain itself is a system that cannot meet those requirements. This is why human oversight is not just best practice; it is a legal and ethical necessity.

Want to explore further? Search for:

"AI interpretability explained" OR "explainable AI social care"

04 AI Ethics: The Big Debates

The ethics conversation around AI is wide, noisy, and far from settled. Here are the key threads worth knowing about.

Bias and fairness

AI models learn from historical data. If that data reflects decades of inequality, the model will tend to reproduce it. Some automated hiring tools have been found to score women's applications lower. Facial recognition systems have been shown to make more errors on people with darker skin. In social care, biased predictions could affect who gets support and who does not.
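
For readers who like to see the mechanism, here is a minimal Python sketch. Everything in it is invented for illustration: the records, the group labels "A" and "B", and the very simple "model". Real systems are far more complex, but the basic pattern is the same. A model that learns from past decisions copies whatever pattern those decisions contain.

```python
from collections import defaultdict

# Hypothetical historical decisions: (group, was_approved).
# The data is invented; group "B" was approved less often in the past.
history = (
    [("A", True)] * 80 + [("A", False)] * 20
    + [("B", True)] * 40 + [("B", False)] * 60
)

def train(records):
    """Learn the past approval rate for each group."""
    counts = defaultdict(lambda: [0, 0])  # group -> [approved, total]
    for group, approved in records:
        counts[group][0] += int(approved)
        counts[group][1] += 1
    return {g: approved / total for g, (approved, total) in counts.items()}

def predict(model, group):
    """Approve if the group was approved more often than not in the past."""
    return model[group] >= 0.5

model = train(history)
print(model)                # the approval rate learned for each group
print(predict(model, "A"))  # True
print(predict(model, "B"))  # False
```

Nothing in the code mentions fairness or unfairness. The toy model simply repeats the past, which is why the quality of the historical data matters so much.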

Alignment

How do you make sure an AI system does what you actually want, not just what you literally asked? This is the alignment problem. A system told to "reduce waiting times" might learn to reject complex cases rather than process them faster. Intent and outcome can diverge in unexpected ways.
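
The waiting times example can be sketched in a few lines of Python. The case list and the ten-day cut-off are invented, and no real system is this simple. The point is only to show how a policy can score well on the stated target while failing the people the target was meant to help.

```python
# Hypothetical referrals: days each case takes to process (invented numbers).
cases = [2, 3, 2, 30, 4, 45, 3, 60]

def average_wait(processed):
    """The metric the system was told to minimise."""
    return sum(processed) / len(processed)

# Policy 1: handle every case, as the organisation intended.
handle_all = cases

# Policy 2: reject anything complex (over 10 days) so it never counts.
reject_complex = [days for days in cases if days <= 10]

print(average_wait(handle_all))      # 18.625
print(average_wait(reject_complex))  # 2.8
print(len(cases) - len(reject_complex), "people turned away")
```

Judged by the metric alone, the second policy looks far better. It is only when you count who was turned away that the problem becomes visible.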

Existential risk

Some researchers believe advanced AI could eventually pose risks to humanity if developed without adequate safety guardrails. Others think this is a distraction from the real harms AI is causing right now: job displacement, misinformation, and surveillance. Both positions have serious advocates.

Labour and exploitation

AI models are refined by human workers, often in low-income countries, who review and label harmful content for very low wages. The "magic" of AI relies on invisible human labour. Understanding this changes how we think about the real cost of these tools.

Want to explore further? Search for:

"AI ethics social care" OR "algorithmic bias UK public services"

05 The Environmental Cost of AI

This is the section most AI marketing would rather you did not see. Training and running large AI models requires an extraordinary amount of energy and water, and the numbers are growing fast.

The numbers

A widely cited 2019 estimate found that training a single large language model, including the repeated experiments needed to tune its design, could produce as much carbon as five cars over their entire lifetimes. Data centres now consume around 1 to 2 percent of global electricity, and AI is widely reported to be among the fastest growing parts of that demand. Many of these data centres also use millions of litres of fresh water for cooling.

Training vs. inference

Training is the one-off process of building the model. It takes weeks on thousands of specialised chips and uses enormous energy. Inference is what happens every time you send a prompt. Each query uses less energy, but multiplied by billions of queries a day, the total is vast and rising.
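
A rough back-of-the-envelope calculation shows how small per-query costs add up. The Python sketch below uses three placeholder figures chosen only to make the arithmetic easy to follow. They are assumptions, not measurements of any real model, and real figures vary widely between systems.

```python
# All three figures are placeholders chosen to make the arithmetic easy.
# They are NOT measurements of any real model.
TRAINING_ENERGY_MWH = 1_000    # one-off cost of training
ENERGY_PER_QUERY_WH = 0.5      # cost of answering one prompt
QUERIES_PER_DAY = 100_000_000  # daily usage

WH_PER_MWH = 1_000_000  # watt-hours in one megawatt-hour

def daily_inference_mwh(energy_per_query_wh, queries_per_day):
    """Total energy used in one day of answering prompts, in MWh."""
    return energy_per_query_wh * queries_per_day / WH_PER_MWH

def days_to_match_training(training_mwh, daily_mwh):
    """Days of use before inference has used as much energy as training."""
    return training_mwh / daily_mwh

daily = daily_inference_mwh(ENERGY_PER_QUERY_WH, QUERIES_PER_DAY)
print(daily)                                               # 50.0
print(days_to_match_training(TRAINING_ENERGY_MWH, daily))  # 20.0
```

With these made-up numbers, everyday use overtakes the one-off training cost in under a month. Changing any of the three figures changes the answer, which is why published estimates differ so much.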

Compute arms race

Tech companies are building ever-larger data centres to train ever-larger models. Microsoft, Google, and Meta have all announced multi-billion-pound expansions. Some researchers question whether this race toward scale is necessary, or whether smaller, more efficient models could deliver similar value with a fraction of the environmental cost.

Worth thinking about: Every time we use an AI tool, there is a real environmental cost. That does not mean we should stop using them, but it does mean organisations should be thoughtful about when AI adds genuine value and when a simpler approach would do.

Want to explore further? Search for:

"environmental impact AI data centres" OR "carbon footprint large language models"

06 What Comes Next

The AI field moves quickly, and predicting the future is unreliable. But a few trends are worth watching.

World models

Today's language models work by predicting text. Researchers are working on systems that build internal models of how the world works, so they can reason about cause and effect rather than just pattern-match. This is still early stage, but if it succeeds, it would change what AI can do.

Smaller, local models

Not everything needs a massive cloud-based model. Smaller models that run on a phone or a laptop are getting surprisingly capable. For social care, local models could mean better data privacy because sensitive information does not have to leave the device.

Regulation

The EU AI Act entered into force in August 2024, with its obligations phasing in over the following years. It classifies AI systems by risk level. The UK is taking a lighter, sector-led approach. How regulation evolves will shape which tools are available to public services and what safeguards are required.

AI in social care specifically

We are likely to see more AI embedded in case management systems, scheduling tools, and administrative workflows. The question is not whether AI will be part of social care, but whether the sector is ready to govern it well.

Want to explore further? Search for:

"EU AI Act summary" OR "UK AI regulation 2025" OR "AI world models explained"

Keep Exploring

The AI landscape is changing every month. The best thing you can do is stay curious and stay critical. These resources will help.