A Responsible AI Framework for Social Care

Structured training and quality assurance for safe AI use. Built for practitioners, managers, and governance leads across England and the wider UK.

AI-generated documents can look polished and confident. But underneath the professional language, critical details can be missing: risk factors softened, complexity smoothed out, the person's real story replaced by something that reads well but says very little.

The TESSA Framework gives your staff the structured approach they need to catch those gaps. It trains them to give AI better input and then check every output against relevant legislative standards before it goes anywhere near a case file.

The result: AI that saves time without compromising quality, accountability, or professional judgement.

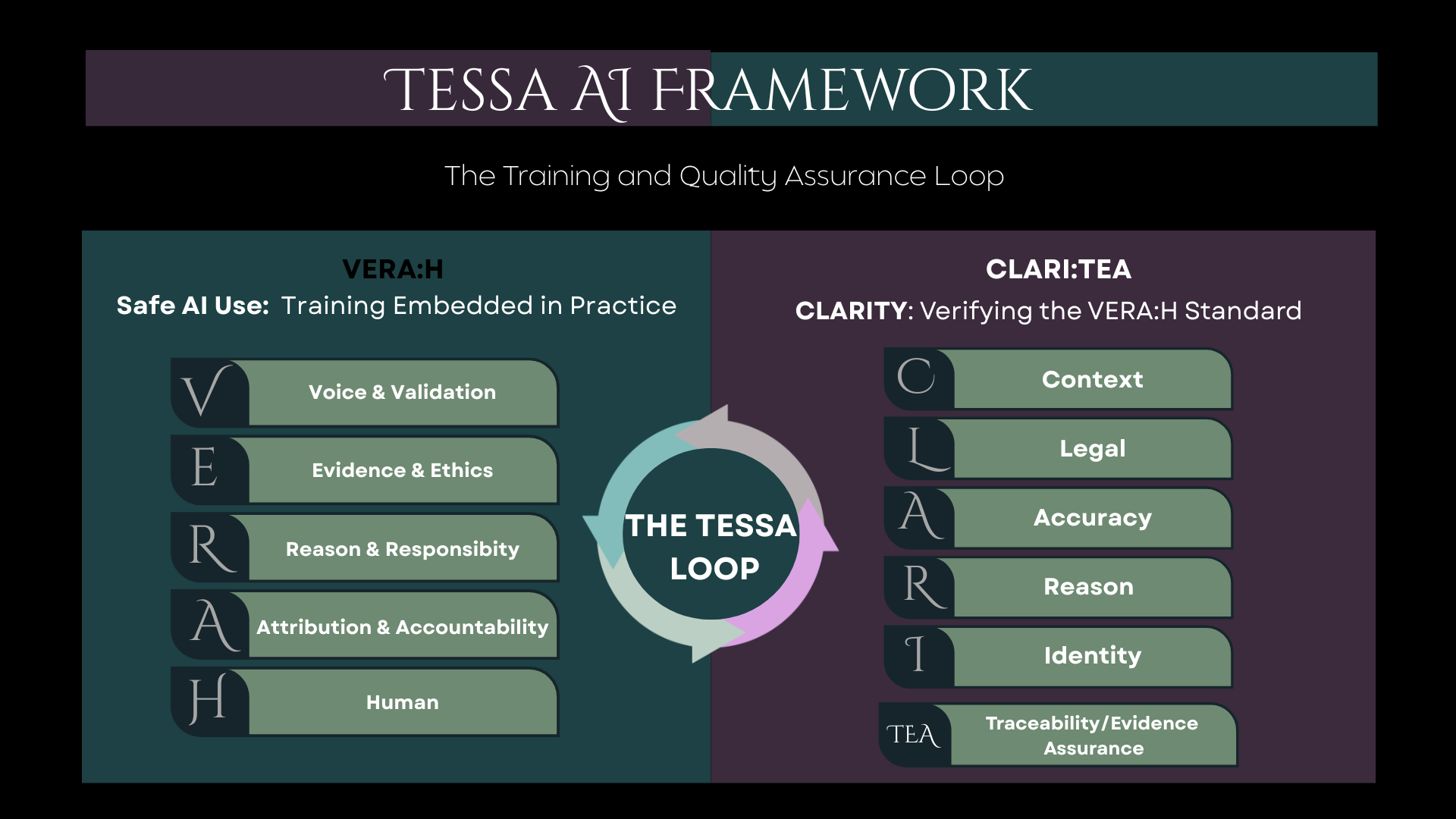

VERA:H trains your staff to give AI the right input. CLARITY checks the output meets professional standards. Together they form a cycle of safe, improving practice.

Training embedded in practice. Before the AI writes anything, VERA:H takes the practitioner through five structured checkpoints: whose voice, what evidence, what reasoning, who is accountable, and what only a human can do. This builds the skill into the workflow rather than leaving it to a separate course that staff forget by Friday.

Quality assurance after the AI writes. CLARITY reviews every output against relevant legislative domains for language, evidence, needs, and structure. It does not rewrite anything. It flags where a practitioner or supervisor needs to look again. Think of it as a second pair of eyes built into the process.

Most AI governance frameworks come from technology companies. They speak in abstractions. The TESSA Framework was designed inside social care services, by people who understand what assessments across children's and adult services need to say, and what relevant legislation requires.

It aligns with the Professional Capabilities Framework, CQC quality statements, and Social Work England professional standards. It does not ask staff to learn a new system; it slots into the way they already work.

The person's story stays in their own words. AI may draft, but the practitioner's professional reasoning and the individual's lived experience are never overwritten.

Every output has a named human behind it. The framework ensures that whoever signs off on a document has genuinely reviewed, challenged, and approved the content.

CLARITY feeds back into VERA:H. Each quality check reveals where input can improve, creating a loop that strengthens practice over time rather than a one-off training event.

See how Future Families, a Midlands fostering agency, used the TESSA Framework to roll out Microsoft Copilot across 153 staff with full governance, tailored training, and measurable impact.

Find out where your organisation stands, or talk to us about rolling the framework out to your teams.